What are Common Best Practices for Secure Software Development?

When security holes are exploited by attackers, it would be unfair to blame only flaws in the source code. Network and server security plays an important and sometimes central role in protecting data and ensuring reliability. Attention should therefore be paid both to the quality of software development and to the protection of the infrastructure. So what are common best practices for secure software development?

Challenges to Proactive Software Development Workflows

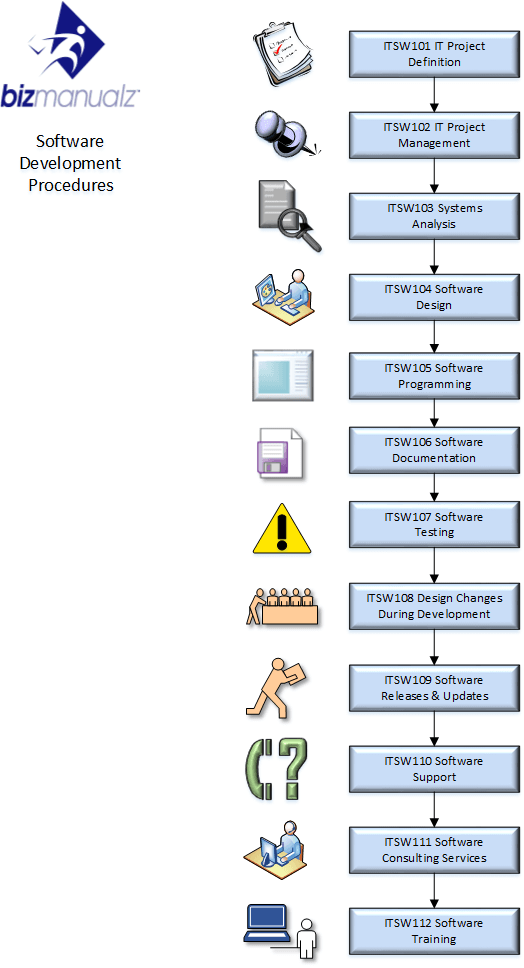


More than five hundred types of vulnerabilities can be found in software source code. Injections, encryption problems, memory leaks, cross-site scripting, and many other issues can cause serious trouble when you are least prepared for them. Recently, most of the challenges in software development have come from architecture. That is, the errors occur not while writing the code but while designing the application.

Examples include storing plaintext passwords in sessions and databases, allowing users to browse server directories outside the permitted perimeter, and missing access control.
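Two of these design flaws can be illustrated with a minimal Python sketch. It uses only the standard library; the function names (`hash_password`, `verify_password`, `resolve_inside`) and the iteration count are assumptions made for illustration, not a prescribed implementation.

```python
import hashlib
import hmac
import os
from pathlib import Path

# Illustrative work factor (assumption); tune it for your own hardware.
PBKDF2_ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) so that only the derived digest is stored."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, PBKDF2_ITERATIONS
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, PBKDF2_ITERATIONS
    )
    return hmac.compare_digest(digest, expected)


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Resolve user_path under base_dir and reject attempts to escape it."""
    base = Path(base_dir).resolve()
    target = (base / user_path).resolve()
    if not target.is_relative_to(base):  # requires Python 3.9+
        raise PermissionError(f"path escapes {base}: {user_path}")
    return target
```

With this design, the database holds only a salt and a derived digest, and a request such as `resolve_inside("/srv/files", "../etc/passwd")` raises `PermissionError` instead of leaving the allowed directory.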

Being proactive means acting now to prevent future problems. When it comes to software development, this means writing code and building architecture to avoid unpleasant surprises in the future.

Proactivity often becomes a significant obstacle to effective interaction between IT and business because it entails:

- Longer development time and, as a result, higher costs.

- Additional costs and headaches associated with hiring specialists who understand the principles of secure development and can follow them.

- Stricter requirements for the clarity and consistency of development processes.

Many companies regard these factors as redundant development. Their development then follows the wrong path: superficial technical specification > cursory examination and analysis > rapid development > quick testing (sometimes skipped altogether) > release > patching.

It is a vicious circle. Under such conditions, almost every task solved by adding new code to the product will require further support. The company is lucky if no large-scale incident (injection, data leakage, etc.) happens between one patch and the next.

Development Processes Allow No Compromises

Nowadays, business increasingly depends on information technology. It makes sense to think of development as the plane on which the entire company flies. Would you compromise on flight safety in that situation? The answer is obvious. Unfortunately, in practice, not every company has the vision and resources to create and support a sound proactive development process. And when resources do become available, it is not always clear where and how to start.

Safe Software Development From the Start

If you are just starting out and are still shaping your workflows and architecture, whether completely from scratch or as a new project on existing infrastructure, the most critical factors for proactive security are well-designed business processes and clearly defined key stages in the lifecycle of every task.

It does not matter what methodology you use here. It is important to comply with standard requirements, including:

Task Tracking

It is highly recommended to break business workflows into sub-projects in a task tracker (Jira, Basecamp, YouTrack). The business workflow is crucial because, as the volume of tasks grows, task formulation and analysis tend to become superficial, and the clarification or testing stages are skipped entirely. A correct task lifecycle does not allow a step to be completed without the participation of the person responsible for it.

The Lifecycle of a Task

For example, let us take a medium-sized fintech company with a development team of twelve people (Angular/Java). Here it may look like this: idea > analysis > collecting requirements (drafting technical specifications) > determination of the task executor and deadlines > approval > development > internal testing > external testing > pre-release testing > release > support.

If you are wondering where the proactive security is here, the answer is quite simple: everything depends on the quality of the task definition. The initial security requirements should be laid down during analysis and requirements collection.
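The lifecycle above, together with the rule that no step is completed without its responsible person, can be sketched as a small state machine. This is only an illustration: the `Task` class, its fields, and the shortened stage names are hypothetical and are not tied to any particular tracker.

```python
from dataclasses import dataclass, field

# Stage names abbreviated from the lifecycle described above.
STAGES = [
    "idea", "analysis", "requirements", "assignment", "approval",
    "development", "internal testing", "external testing",
    "pre-release testing", "release", "support",
]


@dataclass
class Task:
    title: str
    owners: dict[str, str]  # stage -> responsible person (hypothetical names)
    stage_index: int = 0
    history: list[tuple[str, str]] = field(default_factory=list)

    @property
    def stage(self) -> str:
        return STAGES[self.stage_index]

    def advance(self, signed_off_by: str) -> str:
        """Move to the next stage only if the current stage owner signs off."""
        if self.stage_index == len(STAGES) - 1:
            raise ValueError("task is already in its final stage")
        owner = self.owners.get(self.stage)
        if owner is None:
            raise PermissionError(f"no responsible person for '{self.stage}'")
        if signed_off_by != owner:
            raise PermissionError(f"'{self.stage}' must be signed off by {owner}")
        self.history.append((self.stage, signed_off_by))
        self.stage_index += 1
        return self.stage
```

A task whose analysis stage has no owner, or whose owner has not signed off, simply cannot reach development, which is where skipped security requirements would otherwise slip through.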

Appointment of Responsible Persons for Each Stage

The goal is not to find a culprit but to clearly understand the tasks and results. With a well-defined process for implementing a specific task, you will need, in addition to programmers, a tester, a business analyst, and a methodologist who is responsible for the task on behalf of those who set it, a kind of local Product Owner. It is also desirable to have a technical writer, a systems analyst, and an architect to build the right connections with the existing functionality.

Development Requirements

Development requirements cover the development pattern, code style, unit test writing (before or after the main code), rules for working with version control tools (branching and committing changes), code review, and so on. Almost all of these points are strongholds of proactive security, and, unfortunately, only 20% to 30% of companies use them.

It is worth mentioning that the lion’s share of future problems can be avoided if there are detailed requirements for task definition, including technical analysis, and an experienced tech lead performing code reviews. The code review process should be designed and implemented in accordance with the style established in the company and the requirements laid down by the technical director. Some companies refuse to merge code if tests do not cover it or if it deviates even slightly from the general style. Whether to be flexible or to comply strictly with all requirements is your choice.
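The strict-versus-flexible choice can be made explicit as a merge gate with configurable thresholds. The sketch below is hypothetical: `MergeRequest`, `merge_blockers`, and the default limits are illustrative names and values, and a real pipeline would fill the fields from its coverage and style tools.

```python
from dataclasses import dataclass


@dataclass
class MergeRequest:
    coverage_percent: float  # test coverage of the changed code
    style_violations: int    # findings reported by the style checker
    approved_by_lead: bool   # outcome of the tech lead's review


def merge_blockers(mr: MergeRequest, min_coverage: float = 80.0,
                   max_style_violations: int = 0) -> list[str]:
    """Return the reasons a merge is refused; an empty list means it may proceed."""
    blockers = []
    if mr.coverage_percent < min_coverage:
        blockers.append(
            f"coverage {mr.coverage_percent:.0f}% is below {min_coverage:.0f}%"
        )
    if mr.style_violations > max_style_violations:
        blockers.append(f"{mr.style_violations} style violation(s) found")
    if not mr.approved_by_lead:
        blockers.append("tech lead review is missing")
    return blockers
```

A strict team keeps the defaults, while a more flexible one might call `merge_blockers(mr, min_coverage=60, max_style_violations=5)`. Either way, the policy is written down and applied uniformly rather than decided ad hoc.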

Proactive Code Support

One can talk about how to write secure code for a long time. The conversation is not only about controlling memory leaks, strong typing, access control, correct validation, and returning data correctly. Things like code style, commenting, and documentation are vital for code support.

Mandatory test coverage of key functionality is very important. In practice, there are tasks for which writing unit tests really can be redundant development. For example, if a function returns true or false depending only on the type of its single argument, it might not need testing. Meanwhile, it is critical to cover authorization, comparison, and incoming-data processing methods with unit tests, so that no problems arise later in these key areas, which tend to receive less and less attention over time.
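As an illustration of covering incoming-data processing with unit tests, here is a minimal sketch using Python's built-in `unittest`. The `parse_amount` function and its rules (positive, finite, at most two decimal places) are assumptions invented for the example.

```python
import unittest
from decimal import Decimal, InvalidOperation


def parse_amount(raw: str) -> Decimal:
    """Parse a user-supplied payment amount, rejecting anything suspicious."""
    try:
        amount = Decimal(raw.strip())
    except (InvalidOperation, AttributeError):
        raise ValueError(f"not a number: {raw!r}") from None
    if not amount.is_finite():
        raise ValueError("amount must be finite")
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount.as_tuple().exponent < -2:
        raise ValueError("at most two decimal places are allowed")
    return amount


class ParseAmountTests(unittest.TestCase):
    def test_accepts_plain_amount(self):
        self.assertEqual(parse_amount(" 10.50 "), Decimal("10.50"))

    def test_rejects_bad_input(self):
        # Each case is a kind of input an attacker or a buggy client might send.
        for raw in ["abc", "", "-5", "0", "NaN", "Infinity", "10.999"]:
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    parse_amount(raw)
```

Saved as a module, the tests run with `python -m unittest`. The rejected inputs document the security requirements of the function in executable form, so a later change that loosens validation fails the build.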

In fact, it is never too late to introduce tests, even if your software is already ten years old and not a single one of its methods is covered so far. Just start planning your development a little differently and introduce new requirements for technical staff. Unless, of course, the issue of total refactoring is critical. In this case, you can refer to the considerations laid down in the previous part of the article.

Software Integration Testing

Integration testing is a more complex and comprehensive approach that inspects the application as a whole by writing tests for groups of modules and classes, that is, by checking the interaction of various software components. If you need to implement it, you must understand that this area will be challenging to assign to the developers (as with unit testing). It is more efficient to have dedicated testing specialists for the timely implementation, expansion, and updating of libraries.
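To make "checking the interaction of components" concrete, here is a minimal sketch in which a service class is tested together with a real (in-memory SQLite) repository rather than a mock. `UserRepository`, `RegistrationService`, and the table layout are hypothetical names chosen for the example.

```python
import sqlite3


class UserRepository:
    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS users (email TEXT PRIMARY KEY, active INTEGER)"
        )

    def add(self, email: str) -> None:
        # Parameterized query: user input is never concatenated into SQL.
        self.conn.execute("INSERT INTO users VALUES (?, 1)", (email,))

    def is_active(self, email: str) -> bool:
        row = self.conn.execute(
            "SELECT active FROM users WHERE email = ?", (email,)
        ).fetchone()
        return bool(row and row[0])


class RegistrationService:
    def __init__(self, repo: UserRepository):
        self.repo = repo

    def register(self, email: str) -> str:
        email = email.strip().lower()
        if "@" not in email:
            raise ValueError("invalid email")
        try:
            self.repo.add(email)
        except sqlite3.IntegrityError:
            raise ValueError("already registered") from None
        return email


def test_registration_flow() -> None:
    """Exercise the service and the repository together, end to end."""
    repo = UserRepository(sqlite3.connect(":memory:"))
    service = RegistrationService(repo)
    assert service.register(" Alice@Example.com ") == "alice@example.com"
    assert repo.is_active("alice@example.com")
    try:
        service.register("alice@example.com")
    except ValueError:
        pass
    else:
        raise AssertionError("duplicate registration was accepted")
```

The duplicate check passes only if the service's normalization and the repository's primary-key constraint agree with each other, which is exactly the kind of defect a unit test of either class alone would miss.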

Functional Testing of Software

Functional testing, which in small projects is successfully replaced by manual testing, is an effective way to find a large number of vulnerabilities as well as application errors. Tools for it are available as ready-made solutions widely used by testing departments throughout the world (HPE UFT, Selenium, etc.).

Static and Dynamic Code Analysis

Extra tools that improve the quality of the code and, in some cases, significantly reduce the risk of vulnerabilities are static and dynamic code analyzers. These programs differ in the process of executing checks. They are aimed, in essence, at the same thing though. Both contribute to preventing and searching for already existing errors.

There are many differences between them, but the main thing is that static analyzers check the code before it is executed, while dynamic analyzers check the running program for resources being used, memory leaks, and a number of other vulnerabilities.

A static analyzer can be thought of as an automated code review with deeper penetration (full analysis of the entire code base, including dead code) but without the ability to predict runtime behavior. Such analysis may not always detect a memory leak, for example. It also frequently produces false positives and flags suspicious lines in which only the programmer can determine whether an error is actually present.
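The idea of checking code without executing it can be shown with a toy static check built on Python's standard `ast` module. This is a deliberately tiny sketch, not how SonarQube or similar tools work internally; the rule set and the `find_risky_calls` name are assumptions.

```python
import ast

RISKY_CALLS = {"eval", "exec"}  # illustrative rule set (assumption)


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line, name) for each direct call to a risky builtin."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


SAMPLE = '''
def unused(expr):          # dead code: never called, still analyzed
    return eval(expr)

def safe(eval_mode):       # the name merely contains "eval"; not a call
    return eval_mode
'''

print(find_risky_calls(SAMPLE))  # prints [(3, 'eval')]
```

The check finds the call inside a function that is never invoked, which a test run would not reach. It also shows the limits mentioned above: it cannot tell whether `expr` is ever attacker-controlled, so a human still has to judge each finding.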

For a developer who uses IntelliJ IDEA or another modern IDE, a static analyzer is already included in the basic functionality of the development environment. Recently, these built-in analyzers have improved significantly and, for an individual developer, cover most of the code analysis process.

A significant difficulty is that an IDE analyzer is almost impossible to integrate into a continuous integration (CI/CD) pipeline or to use for collecting department-wide analysis statistics. Such tasks require standalone solutions such as SonarQube, Checkstyle, or PMD.

The use of dynamic analyzers (such as Valgrind, Acunetix, GitLab Ultimate, etc.) has historically been much less common in practice, most likely because they are redundant for most applications. In web applications, dynamic analysis is often substituted by functional testing, web environment logs, and profiling coupled with debugging in the IDE. Dynamic analysis tools perform best when large multi-threaded applications are developed at scale in a pipeline. A primary purpose of such analyzers is monitoring and controlling memory leaks (especially in C/C++ applications).
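A dynamic check observes the program while it runs. As a rough illustration in Python (not a substitute for tools like Valgrind), the standard `tracemalloc` module can measure memory that is still held after repeated calls. The leaking `handle_request` function and the `memory_growth` helper are hypothetical.

```python
import tracemalloc

_cache = []  # hypothetical module-level cache that is never cleared


def handle_request(payload: bytes) -> int:
    _cache.append(payload * 100)  # the "leak": data retained after each call
    return len(payload)


def memory_growth(func, calls: int) -> int:
    """Run func repeatedly and return the net bytes still allocated afterwards."""
    tracemalloc.start()
    before, _peak = tracemalloc.get_traced_memory()
    for _ in range(calls):
        func()
    after, _peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return after - before


leaked = memory_growth(lambda: handle_request(b"x" * 1_000), calls=200)
print(f"retained after 200 calls: {leaked} bytes")
```

Memory that grows in proportion to the number of calls is the signal to investigate. Nothing in the source of `handle_request` is syntactically wrong, which is why this kind of defect tends to surface only at run time.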

Secure Software Development

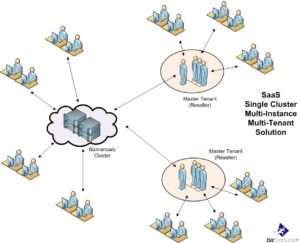

Comprehensive software security also requires protecting the network and the databases, whether they are hosted on local servers or remotely (colocation or cloud). For example, in a DDoS attack, what matters to cybercriminals is the configuration of the network environment, traffic filtering, and firewall settings rather than how the code is written.

Most threats that are initiated from the outside but operate inside your network (executing server commands, dropping files that your software then executes) can also be prevented with correct network configuration and additional tools such as content and spam filters, geo-filtering, network antiviruses, and intrusion detection and prevention systems (IDS/IPS).

At the same time, we should not forget about threats that come from within, such as backdoors planted in the code, network keyloggers reading passwords, or an employee copying a dev database dump to a flash drive and taking it out of the company to sell to competitors. The measures taken to defend against such attacks vary greatly depending on the company profile, culture, internal processes, and available resources.

Given the speed of technology development and the growing number of external and internal security threats, it is worth rethinking the approaches to development processes and the main goals that the company's teams pursue. All approaches, processes, and development infrastructure must be reviewed from a security point of view. Application speed and interface convenience can take a back seat when the leakage of personal or corporate information, or financial and reputational damage, is at stake.

Redefining your company’s approach to secure software development today is the best way to address problems in the future. Exploiting potential security holes will cost attackers more if vulnerabilities in the code are proactively eliminated and prevented from the start of a project or task.

Author Bio: David Balaban is a computer security researcher with over 18 years of experience in malware analysis and antivirus software evaluation. David runs MacSecurity.net and Privacy-PC.com projects that present expert opinions on contemporary information security matters, including social engineering, malware, penetration testing, threat intelligence, online privacy, and white hat hacking. David has a strong malware troubleshooting background, with a recent focus on ransomware countermeasures.
